“What do you know about R&D tax credits?”

I was demoing some of our newest features to a Fortune 100 engineering leader when he asked me this question.

Little did I know I was about to go down a rabbit hole. What started as a product demo turned into a conversation about how much of his team’s work quietly qualifies as research and development (R&D) and how little of that story they’d been able to tell.

R&D Tax Credits 101

R&D tax credits are a way for a government, like the United States government, to foster innovation by letting organizations reduce the taxes they owe based on their qualifying R&D expenses. For software development, this means identifying the engineering effort that is related to R&D and sharing those estimates with the finance team to support the claim. Most engineering leaders take part because it’s “free money,” but the process has always been difficult, and it may be even harder now given the new pressures on the role in the age of AI.

If you’ve only heard R&D credits described as “free money,” here’s the actual test the IRS uses under Internal Revenue Code §41 to decide what qualifies. Every project has to clear all four of these hurdles:

1. Technological in nature

The work has to rely on principles of computer science, engineering, or other hard sciences. This is exactly the work your software and artificial intelligence (AI) teams do every day: system design, distributed architectures, model integration, performance engineering.

2. Elimination of uncertainty

There must be real technical unknowns at the start: can we make this scale, is this architecture viable, will this model hit the latency target? If engineers are asking whether they can make something work rather than when something can be shipped, you’re in the right territory for R&D tax credits.

3. Process of experimentation

You need a systematic way of testing options: prototyping, A/B testing, modeling, iterating on different designs or algorithms to resolve that uncertainty. In practice, that’s what shows up as branches, spikes, refactors, and multiple PRs as teams try and discard different approaches.

4. Develops or improves a business component

The work must create or improve a product, process, technique, formula, or software with better functionality, performance, reliability, or quality. New features, major re-architectures, performance overhauls, or hardening an AI-powered workflow all qualify more readily than routine break-fix work.

For software and AI-heavy teams, that includes new algorithm development, new service or data architectures, scaling an AI feature into production, or optimizing AI-generated code for performance and reliability. These patterns emerge when teams lean into code generation but still need to keep the codebase healthy at AI speed.
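The four hurdles are conjunctive: a project that clears three of them still doesn’t qualify. A minimal Python sketch of that rule follows. The `BusinessComponent` record, its field names, and the two example projects are hypothetical, and the sketch takes each yes/no answer as given; in a real study, answering those questions with documentation is the hard part.

```python
from dataclasses import dataclass


@dataclass
class BusinessComponent:
    """Hypothetical record of one project, scored against the four-part test."""
    name: str
    technological_in_nature: bool       # relies on computer science / engineering principles
    eliminates_uncertainty: bool        # real technical unknowns existed at the start
    process_of_experimentation: bool    # options were tested systematically (prototypes, spikes, A/B tests)
    improves_business_component: bool   # new or improved product, process, technique, or software


def passes_four_part_test(c: BusinessComponent) -> bool:
    """All four hurdles must be cleared; failing any one disqualifies the component."""
    return all((
        c.technological_in_nature,
        c.eliminates_uncertainty,
        c.process_of_experimentation,
        c.improves_business_component,
    ))


# Hypothetical examples: a re-architecture with open technical questions vs. a routine fix.
rearch = BusinessComponent("event-pipeline re-architecture", True, True, True, True)
hotfix = BusinessComponent("routine null-pointer hotfix", True, False, False, True)

print(passes_four_part_test(rearch))  # True
print(passes_four_part_test(hotfix))  # False
```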

Between federal and state programs, many companies can effectively recover roughly 10–20% of their qualified R&D spend (mostly engineering wages, plus some cloud and contractor costs). For a mid-sized engineering org, that can translate to hundreds of thousands of dollars per year in credits, which may be more than enough to cover the cost of its AI tooling stack.
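To make that range concrete, here is the arithmetic for a hypothetical mid-sized org. The headcount, average wage, and 60% qualified-time share are invented for illustration, the calculation counts wages only (no cloud or contractor costs), and it simply applies the 10–20% effective recovery range from the paragraph above rather than any statutory credit formula.

```python
def qualified_wages(engineers: int, avg_wage: int, pct_time_qualified: int) -> int:
    """Hypothetical wage base: headcount x average wage x percent of time on qualifying work."""
    return engineers * avg_wage * pct_time_qualified // 100


def credit_range(qualified_spend: int, low_pct: int = 10, high_pct: int = 20) -> tuple[int, int]:
    """Apply the rough 10-20% effective recovery range (federal + state combined)."""
    return qualified_spend * low_pct // 100, qualified_spend * high_pct // 100


# Hypothetical org: 40 engineers, $180,000 average wage, 60% of time on qualifying work.
qre = qualified_wages(40, 180_000, 60)
low, high = credit_range(qre)

print(f"qualified wages: ${qre:,}")                 # qualified wages: $4,320,000
print(f"estimated credit: ${low:,} to ${high:,}")   # estimated credit: $432,000 to $864,000
```

Integer dollars and whole-number percentages keep the arithmetic exact; with these assumptions the estimate lands squarely in the “hundreds of thousands” range.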

Nobody Loves the Process

Most engineering leaders already participate in R&D credit studies, but the burden of the process falls disproportionately on their teams. Proper claims require detailed breakdowns of who worked on what, interviews, spreadsheets, and a mapping of real work back to tax definitions, which together can consume hundreds of hours across engineering, finance, and outside advisors. To feed those studies, teams are usually asked to track time, fill out surveys, or tag tickets in Jira. Each of these interrupts flow and feels like low-value bureaucracy to the engineers doing the actual R&D work.

On top of that, drawing neat lines between qualified R&D feature work and non‑qualified maintenance and bug fixes is hard in real codebases, so leaders end up debating labels instead of shipping software. And they know that if the documentation looks fuzzy, an auditor can challenge or deny the credits.

Back To The Demo

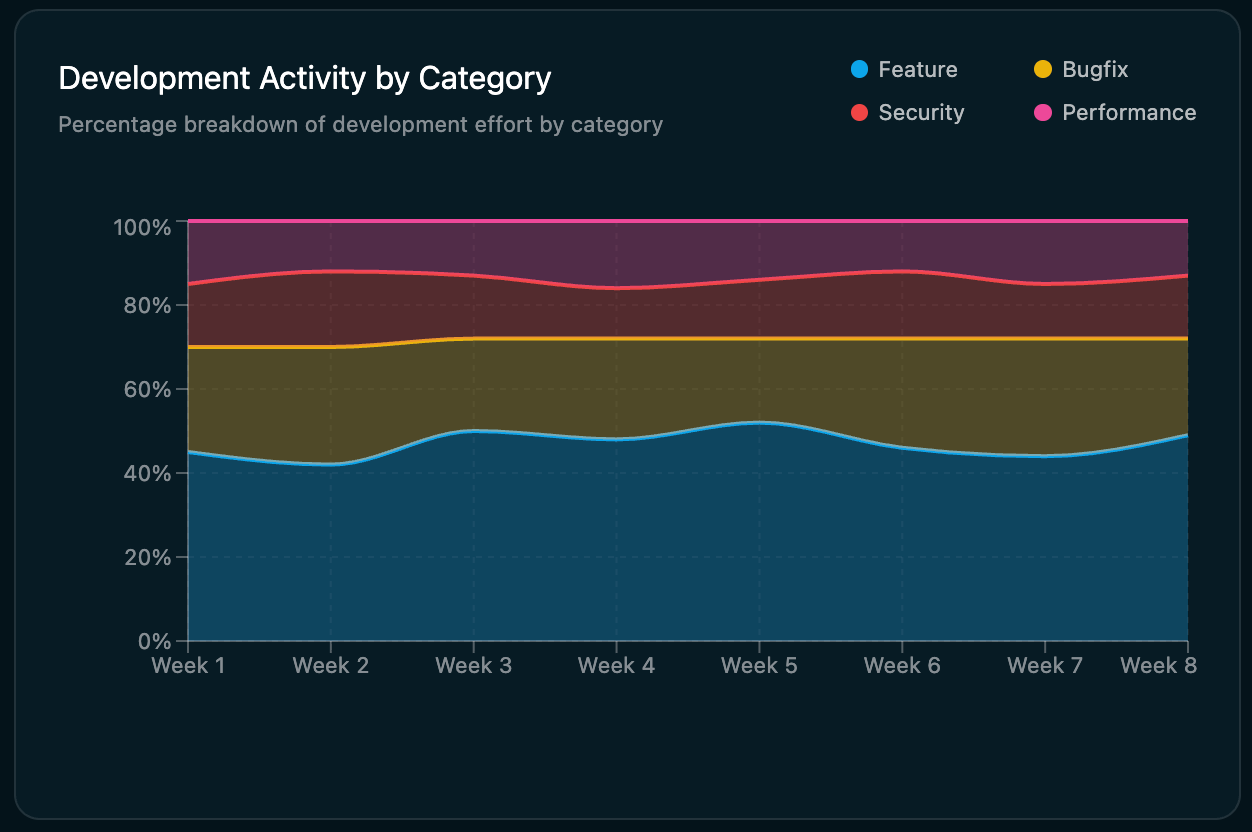

I explained that we generated the data in the chart by evaluating the code in each PR and characterizing the nature of the work: feature vs. bug fix, and so on. I thought it was cool simply because organizations always want more features, but getting this data isn’t easy. Without a feature like this, the “state of the art” is tagging Jira tickets, applying a GitHub PR naming convention, or some variation on those. Working from the actual code makes it both easier (zero manual work) and more accurate (not subject to human tagging errors).

The engineering leader liked our approach for all of these reasons. But the reason he brought up R&D tax credits is that data like this is used to justify them: in practice, feature work is the agreed-upon proxy for R&D. Because our approach required no manual work (no tagging Jira tickets, for example), was based on the code itself, and could easily be applied universally, he thought his finance team could justify a higher credit. His org would also have “the receipts” to prove the work was R&D, since the data covered every PR, by every dev, across every repo and time period, with a clear breakdown of feature vs. maintenance work.
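The aggregation he had in mind can be sketched in a few lines of Python, assuming every PR has already been labeled. This does not reproduce how Flux classifies code: the `ClassifiedPR` record, the category labels, the wage figures, and the choice to weight by PR count are all hypothetical. It treats feature work as the proxy for R&D and allocates each developer’s wages in proportion to their share of feature PRs.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ClassifiedPR:
    """One merged PR with a category label; how the label is produced is out of scope here."""
    repo: str
    author: str
    category: str  # hypothetical labels: "feature", "bugfix", "maintenance"


def feature_share_by_author(prs: list[ClassifiedPR]) -> dict[str, float]:
    """Fraction of each author's PRs labeled "feature" (simple count-based weighting)."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for pr in prs:
        counts[pr.author][pr.category] += 1
    return {author: c["feature"] / sum(c.values()) for author, c in counts.items()}


def estimated_qualified_wages(prs: list[ClassifiedPR], wages: dict[str, int]) -> float:
    """Allocate each author's wages to R&D in proportion to their feature share."""
    shares = feature_share_by_author(prs)
    return sum(wages[author] * share for author, share in shares.items())


# Hypothetical PRs across two repos and two developers.
prs = [
    ClassifiedPR("payments", "ana", "feature"),
    ClassifiedPR("payments", "ana", "feature"),
    ClassifiedPR("payments", "ana", "bugfix"),
    ClassifiedPR("search", "ana", "feature"),
    ClassifiedPR("search", "raj", "maintenance"),
    ClassifiedPR("search", "raj", "feature"),
]
wages = {"ana": 200_000, "raj": 160_000}  # hypothetical annual wages

print(feature_share_by_author(prs))           # {'ana': 0.75, 'raj': 0.5}
print(estimated_qualified_wages(prs, wages))  # 230000.0
```

Weighting by PR count is the simplest choice; a study might instead weight by effort or lines changed. Every author in the PR data also needs an entry in `wages`, or the lookup raises `KeyError`.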

Improved Velocity, Accelerated Feature Delivery, For Free

I now hear this routinely in my conversations with engineering leaders: there’s an opportunity to improve velocity, and in particular to deliver more features, by leaning into code generation, especially as many teams are already grappling with the AI-driven explosion of code volume. And by making it easy to prove this with real data, they can justify larger R&D tax credits. Many are then weighing the credit against the cost of the tooling (like their code gen platforms and Flux), effectively delivering these improvements for free.

See how Flux can classify every PR, by every dev, across your repos so you can defend bigger R&D credits with real receipts. Request a demo.

Ted Julian is the CEO and Co-Founder of Flux, as well as a well-known industry trailblazer, product leader, and investor with over two decades of experience. A market-maker, Ted launched his four previous startups to leadership in categories he defined, resulting in game-changing products that greatly improved technical users' day-to-day processes.